Building Reliable Developer Environments for Agents

The transition from AI as an autocomplete utility to AI as an autonomous engineering orchestrator represents one of the most profound shifts in software development history. As detailed in our exploration of AI for Coding & Developer Tools, models are no longer simply suggesting the next line of code; they are actively reading repositories, identifying architectural flaws, writing comprehensive feature branches, and running test suites.

However, granting a probabilistic, non-deterministic reasoning engine read/write access to your production codebase introduces serious security and operational risks. The fundamental engineering challenge of 2026 is no longer building smarter AI models; it is building reliable, secure, and highly deterministic environments for those models to operate within.

The Threat of Hallucinated Execution

Large Language Models (LLMs) are exceptionally powerful at linguistic reasoning and code generation, but they are inherently probabilistic. They lack an intrinsic understanding of catastrophic failure.

If an autonomous coding agent misunderstands a prompt to "clean up the database migration files," it might execute a shell command that drops a critical production table. If an agent hallucinates a dependency, it might attempt to install a non-existent npm package, exposing the project to supply chain attacks in which an attacker registers the hallucinated name and publishes malicious code under it. As we've analyzed in our discussion of Securing the AI Supply Chain, third-party dependencies represent a major vulnerability vector.

To utilize agentic AI effectively, developers must construct environments that assume the AI will occasionally make catastrophic, logic-defying mistakes. The environment itself must catch the bullet.

Sandboxing: The Foundation of Reliable Execution

The initial step in building a reliable developer environment for an AI agent is strict hardware and software sandboxing.

An agentic model should never execute code directly on a developer's local machine or a live production server. Instead, it must operate within ephemeral, heavily restricted execution containers, typically built with technologies like Docker, WebAssembly (Wasm), or specialized microVMs like Firecracker.

These ephemeral containers provide a complete, isolated Linux filesystem. They contain the specific compilers, runtimes, and dependencies required for the project.

- The Sandbox Constraint: The container has strict resource limits (CPU quotas, memory caps) to prevent an agent from accidentally writing an infinite loop that crashes the host machine.

- Network Isolation: The environment must be strictly firewalled. The agent can only make external network requests to explicitly whitelisted domains (e.g., pulling from a verified internal artifact registry). It cannot browse the open internet or exfiltrate data.

- Ephemeral State: Once the agent completes its task, or if it triggers a fatal error, the container is destroyed and a fresh one is started in its place. No corrupted state persists.
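The three constraints above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a hardened launcher: the function names, the allowlisted hostname, and the specific limits are hypothetical, while the `docker run` flags themselves are standard Docker CLI options. The sketch only builds the command and checks hostnames; it does not start a container.

```python
from typing import List
from urllib.parse import urlparse

# Hypothetical allowlist; the registry hostname is an assumption for illustration.
ALLOWED_HOSTS = {"artifacts.internal.example.com"}


def is_allowed_url(url: str) -> bool:
    """Return True only if the URL's host exactly matches an allowlisted host."""
    host = urlparse(url).hostname
    return host is not None and host in ALLOWED_HOSTS


def build_sandbox_command(image: str, workdir: str, task: List[str]) -> List[str]:
    """Build a `docker run` argv for a throwaway, resource-capped container."""
    return [
        "docker", "run",
        "--rm",                   # ephemeral: the container is removed when it exits
        "--network", "none",      # no network by default; allowlisted egress would
                                  # have to go through a separately managed proxy
        "--cpus", "1.0",          # CPU quota (value is an assumption)
        "--memory", "512m",       # memory cap (value is an assumption)
        "--pids-limit", "128",    # bounds runaway process creation
        "--read-only",            # root filesystem is immutable
        "--tmpfs", "/tmp",        # scratch space that disappears with the container
        "--volume", f"{workdir}:/workspace",
        "--workdir", "/workspace",
        image,
        *task,
    ]
```

For example, `build_sandbox_command("python:3.12-slim", "/srv/checkout", ["pytest", "-q"])` yields an argument list that could be passed to `subprocess.run`. Exact-match hostname checks are a deliberate choice here: suffix or substring matching would let a lookalike domain slip through.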

The Model Context Protocol (MCP) in the Loop

While a sandbox protects the host system, the model still requires a secure, structured method to interact with the broader development workflow (like reading GitHub issues, commenting on Jira tickets, or interacting with AWS resources).

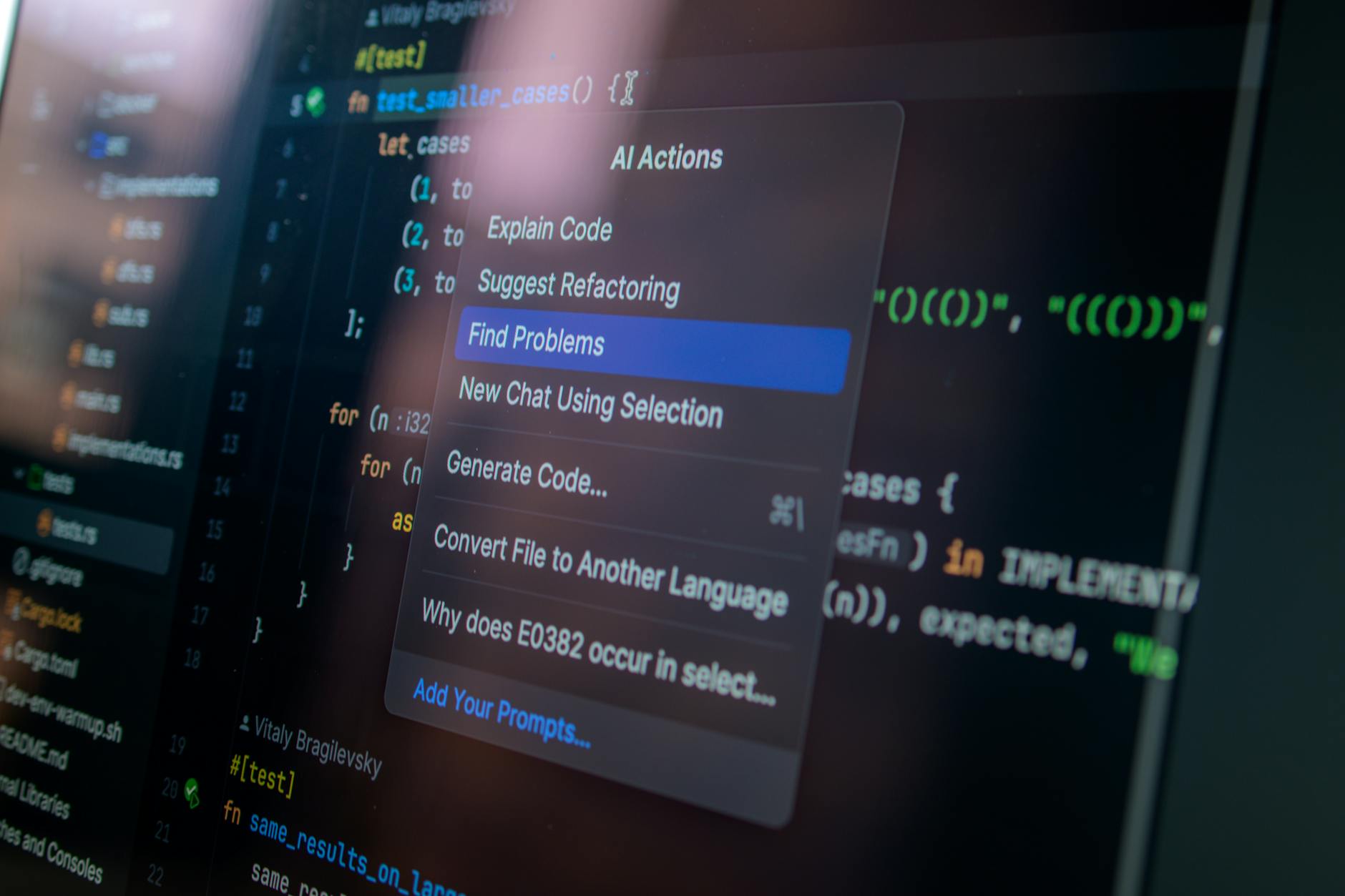

This is where the Model Context Protocol (MCP) acts as the critical bridging architecture. MCP allows developers to build specific, highly restricted "tools" that the AI can call from within its sandbox.

For example, an organization might build an MCP server that provides an execute_sql_query tool. The MCP layer acts as the absolute enforcer.

- It requires the agent to pass a valid database ID.

- It parses the generated SQL and programmatically blocks any DROP, DELETE, or UPDATE commands without human cryptographic signatures.

- It executes the query on a read-replica database, never the primary production instance, and returns the strictly formatted JSON data to the model.

By leveraging MCP, the architecture isolates the reasoner from the executor. The model can think freely, but it can only act through the heavily guarded turnstiles of the MCP framework.
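The guarded tool described above can be sketched as a plain Python function. This is an illustration under stated assumptions rather than an MCP SDK implementation: the database ID, the approval key, and the helper names are hypothetical, an in-memory SQLite connection stands in for the read replica, and an HMAC over the SQL text stands in for a human cryptographic signature. The keyword check is deliberately conservative and is not a substitute for a real SQL parser.

```python
import hashlib
import hmac
import json
import re
import sqlite3
from typing import Optional

# All names below are hypothetical stand-ins, not part of any MCP SDK.
KNOWN_DATABASES = {"analytics-replica"}        # valid database IDs
APPROVAL_KEY = b"replace-with-a-real-secret"   # stand-in for a human approver's key
# Fail closed: the keyword trips the guard even inside a string literal or comment.
BLOCKED = re.compile(r"\b(DROP|DELETE|UPDATE)\b", re.IGNORECASE)


def has_valid_approval(sql: str, signature: Optional[str]) -> bool:
    """Check that the signature is an HMAC-SHA256 of the exact SQL text."""
    if signature is None:
        return False
    expected = hmac.new(APPROVAL_KEY, sql.encode(), hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected.encode(), signature.encode())


def execute_sql_query(
    database_id: str,
    sql: str,
    replica: sqlite3.Connection,
    signature: Optional[str] = None,
) -> str:
    """Validate the request, run it on the replica, and return JSON text."""
    if database_id not in KNOWN_DATABASES:
        return json.dumps({"ok": False, "error": "unknown database id"})
    if BLOCKED.search(sql) and not has_valid_approval(sql, signature):
        return json.dumps(
            {"ok": False, "error": "statement requires signed human approval"}
        )
    cursor = replica.execute(sql)
    # description is None for statements that return no rows.
    columns = [col[0] for col in cursor.description or []]
    rows = [dict(zip(columns, row)) for row in cursor.fetchall()]
    return json.dumps({"ok": True, "rows": rows})
```

The model only ever sees the returned JSON string. Every rejection takes the same structured form, so the agent can read the reason and adjust its request without gaining any additional capability.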

Deterministic Testing and Continuous Integration

A reliable agentic environment integrates natively with enterprise Continuous Integration / Continuous Deployment (CI/CD) pipelines.

When an AI agent finishes refactoring a complex module (a common use case explored in Refactoring Legacy Code with Advanced AI), it does not merge its own code.

The agent pushes its changes to an isolated test branch. The CI pipeline initiates a deterministic build process:

- Syntax validation and static typing checks are enforced.

- The entire unit and integration test suite is executed.

- Code coverage reports are generated automatically.

- Security linters scan the generated code for hardcoded secrets or known vulnerability patterns (like cross-site scripting flaws).

If any of these deterministic checks fail, the pipeline pipes the raw error logs directly back into the AI agent's context window via MCP. The agent reads the error, reasons about its mistake, writes a patch, and pushes again. This iterative loop continues until every check passes. Passing checks is strong evidence that the change is sound, not a mathematical proof of correctness.
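The feedback loop can be sketched as follows. This is a minimal illustration, assuming two hypothetical callables: `run_checks` runs the pipeline and reports one result per check, and `request_patch` hands the failure logs to the agent and waits for it to push again. The attempt cap is an added assumption so that a stuck agent cannot loop forever.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class CheckResult:
    name: str     # e.g. "unit-tests" or "security-lint"
    passed: bool
    log: str      # raw output from the check


def run_until_green(
    run_checks: Callable[[], List[CheckResult]],
    request_patch: Callable[[str], None],
    max_attempts: int = 5,
) -> bool:
    """Re-run the checks, feeding failure logs to the agent, until all pass.

    Returns True once every check passes, or False if the attempt budget
    runs out first.
    """
    for attempt in range(1, max_attempts + 1):
        failures = [result for result in run_checks() if not result.passed]
        if not failures:
            return True
        if attempt < max_attempts:
            # Only failing checks are sent back, labeled by check name.
            feedback = "\n\n".join(f"[{r.name}]\n{r.log}" for r in failures)
            request_patch(feedback)
    return False
```

When the function returns False, the branch stays unmerged and can be escalated to a human rather than retried indefinitely.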

Conclusion: The Era of Guardrailed Automation

The true value of autonomous AI development is unlocked only when human engineers trust the infrastructure. Trust is not derived from the intelligence of the LLM; trust is derived from the strength of the sandbox's isolation, the strict limitations of the Model Context Protocol, and the uncompromising determinism of the CI/CD pipeline.

By building resilient environments that expect and gracefully handle AI failure, engineering teams can safely deploy fleets of autonomous developers, accelerating software delivery while maintaining absolute architectural integrity.

Written by MCP Registry team

The official blog of the Public MCP Registry, featuring insights on AI, Model Context Protocol, and the future of technology.